2025

Jonske, Frederic; Kim, Moon; Nasca, Enrico

Why does my medical AI look at pictures of birds? Exploring the efficacy of transfer learning across domain boundaries Journal Article

In: Computer Methods and Programs in Biomedicine, 2025.

Tags: deep learning, fine-tuning, foundation models, Medical AI

@article{jonske2025why,

title = {Why does my medical AI look at pictures of birds? Exploring the efficacy of transfer learning across domain boundaries},

author = {Frederic Jonske and Moon Kim and Enrico Nasca},

url = {https://michaelkamp.org/wp-content/uploads/2025/03/jonske_whydoesmymedicalAIlookatpicturesofbirds.pdf},

year = {2025},

date = {2025-01-31},

urldate = {2025-01-31},

journal = {Computer Methods and Programs in Biomedicine},

publisher = {Elsevier},

keywords = {deep learning, fine-tuning, foundation models, Medical AI},

pubstate = {published},

tppubtype = {article}

}

2024

Singh, Sidak Pal; Adilova, Linara; Kamp, Michael; Fischer, Asja; Schölkopf, Bernhard; Hofmann, Thomas

Landscaping Linear Mode Connectivity Proceedings Article

In: ICML Workshop on High-dimensional Learning Dynamics: The Emergence of Structure and Reasoning, 2024.

Tags: deep learning, linear mode connectivity, theory of deep learning

@inproceedings{singh2024landscaping,

title = {Landscaping Linear Mode Connectivity},

author = {Sidak Pal Singh and Linara Adilova and Michael Kamp and Asja Fischer and Bernhard Schölkopf and Thomas Hofmann},

year = {2024},

date = {2024-09-01},

urldate = {2024-09-01},

booktitle = {ICML Workshop on High-dimensional Learning Dynamics: The Emergence of Structure and Reasoning},

keywords = {deep learning, linear mode connectivity, theory of deep learning},

pubstate = {published},

tppubtype = {inproceedings}

}

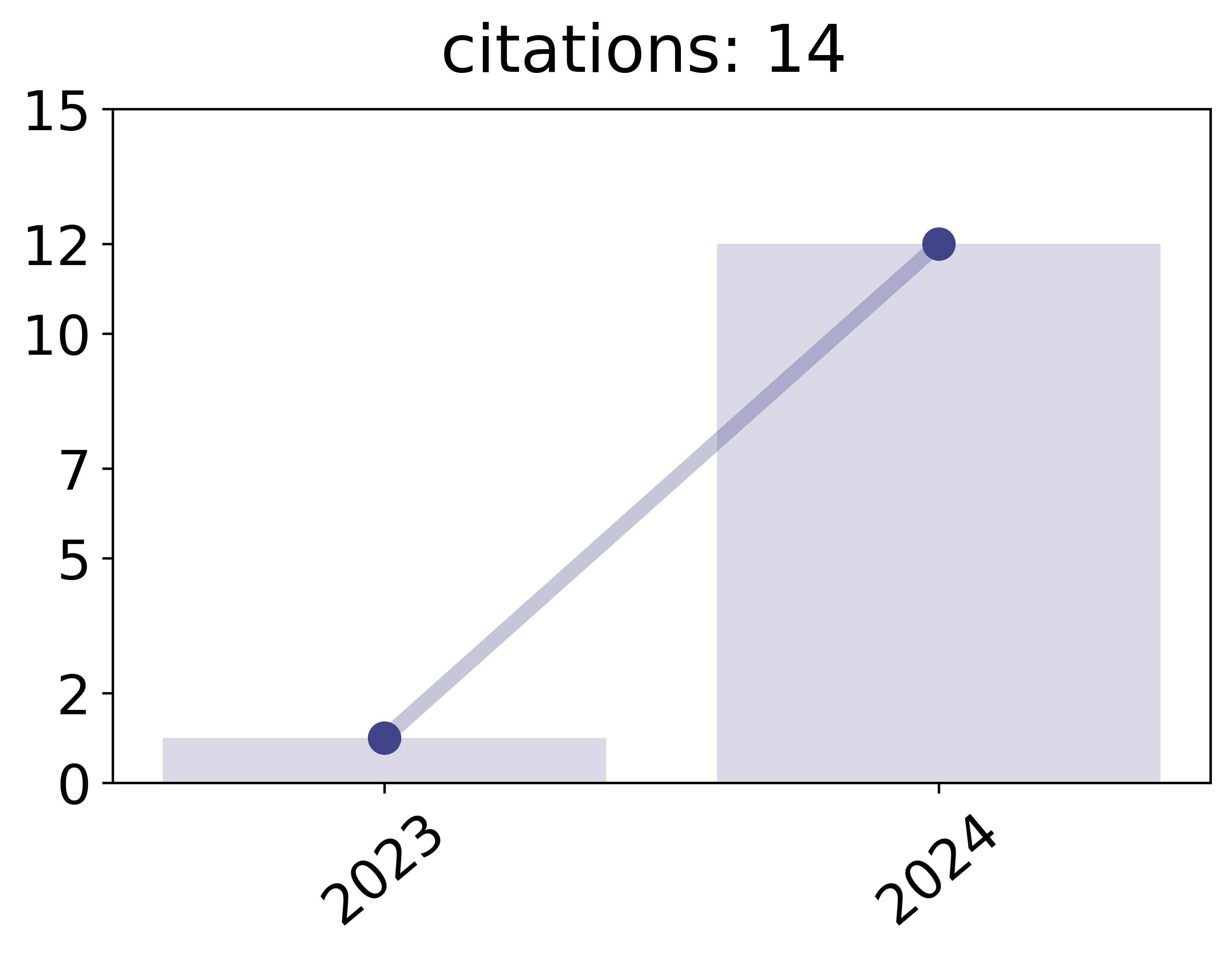

Adilova, Linara; Andriushchenko, Maksym; Kamp, Michael; Fischer, Asja; Jaggi, Martin

Layer-wise Linear Mode Connectivity Proceedings Article

In: International Conference on Learning Representations (ICLR), Curran Associates, Inc., 2024.

Tags: deep learning, layer-wise, linear mode connectivity

@inproceedings{adilova2024layerwise,

title = {Layer-wise Linear Mode Connectivity},

author = {Linara Adilova and Maksym Andriushchenko and Michael Kamp and Asja Fischer and Martin Jaggi},

url = {https://openreview.net/pdf?id=LfmZh91tDI},

year = {2024},

date = {2024-05-07},

urldate = {2024-05-07},

booktitle = {International Conference on Learning Representations (ICLR)},

publisher = {Curran Associates, Inc.},

abstract = {Averaging neural network parameters is an intuitive method for fusing the knowledge of two independent models. It is most prominently used in federated learning. If models are averaged at the end of training, this can only lead to a good performing model if the loss surface of interest is very particular, i.e., the loss in the exact middle between the two models needs to be sufficiently low. This is impossible to guarantee for the non-convex losses of state-of-the-art networks. For averaging models trained on vastly different datasets, it was proposed to average only the parameters of particular layers or combinations of layers, resulting in better performing models. To get a better understanding of the effect of layer-wise averaging, we analyse the performance of the models that result from averaging single layers, or groups of layers. Based on our empirical and theoretical investigation, we introduce a novel notion of the layer-wise linear connectivity, and show that deep networks do not have layer-wise barriers between them. We analyze additionally the layer-wise personalization averaging and conjecture that in particular problem setup all the partial aggregations result in the approximately same performance.},

keywords = {deep learning, layer-wise, linear mode connectivity},

pubstate = {published},

tppubtype = {inproceedings}

}

2023

Adilova, Linara; Abourayya, Amr; Li, Jianning; Dada, Amin; Petzka, Henning; Egger, Jan; Kleesiek, Jens; Kamp, Michael

FAM: Relative Flatness Aware Minimization Proceedings Article

In: Proceedings of the ICML Workshop on Topology, Algebra, and Geometry in Machine Learning (TAG-ML), 2023.

Tags: deep learning, flatness, generalization, machine learning, relative flatness, theory of deep learning

@inproceedings{adilova2023fam,

title = {FAM: Relative Flatness Aware Minimization},

author = {Linara Adilova and Amr Abourayya and Jianning Li and Amin Dada and Henning Petzka and Jan Egger and Jens Kleesiek and Michael Kamp},

url = {https://michaelkamp.org/wp-content/uploads/2023/06/fam_regularization.pdf},

year = {2023},

date = {2023-07-22},

urldate = {2023-07-22},

booktitle = {Proceedings of the ICML Workshop on Topology, Algebra, and Geometry in Machine Learning (TAG-ML)},

keywords = {deep learning, flatness, generalization, machine learning, relative flatness, theory of deep learning},

pubstate = {published},

tppubtype = {inproceedings}

}

2021

Petzka, Henning; Kamp, Michael; Adilova, Linara; Sminchisescu, Cristian; Boley, Mario

Relative Flatness and Generalization Proceedings Article

In: Advances in Neural Information Processing Systems, Curran Associates, Inc., 2021.

Tags: deep learning, flatness, generalization, Hessian, learning theory, relative flatness, theory of deep learning

@inproceedings{petzka2021relative,

title = {Relative Flatness and Generalization},

author = {Henning Petzka and Michael Kamp and Linara Adilova and Cristian Sminchisescu and Mario Boley},

year = {2021},

date = {2021-12-07},

urldate = {2021-12-07},

booktitle = {Advances in Neural Information Processing Systems},

publisher = {Curran Associates, Inc.},

abstract = {Flatness of the loss curve is conjectured to be connected to the generalization ability of machine learning models, in particular neural networks. While it has been empirically observed that flatness measures consistently correlate strongly with generalization, it is still an open theoretical problem why and under which circumstances flatness is connected to generalization, in particular in light of reparameterizations that change certain flatness measures but leave generalization unchanged. We investigate the connection between flatness and generalization by relating it to the interpolation from representative data, deriving notions of representativeness, and feature robustness. The notions allow us to rigorously connect flatness and generalization and to identify conditions under which the connection holds. Moreover, they give rise to a novel, but natural relative flatness measure that correlates strongly with generalization, simplifies to ridge regression for ordinary least squares, and solves the reparameterization issue.},

keywords = {deep learning, flatness, generalization, Hessian, learning theory, relative flatness, theory of deep learning},

pubstate = {published},

tppubtype = {inproceedings}

}

Li, Xiaoxiao; Jiang, Meirui; Zhang, Xiaofei; Kamp, Michael; Dou, Qi

FedBN: Federated Learning on Non-IID Features via Local Batch Normalization Proceedings Article

In: Proceedings of the 9th International Conference on Learning Representations (ICLR), 2021.

Tags: batch normalization, black-box parallelization, deep learning, federated learning

@inproceedings{li2021fedbn,

title = {FedBN: Federated Learning on Non-IID Features via Local Batch Normalization},

author = {Xiaoxiao Li and Meirui Jiang and Xiaofei Zhang and Michael Kamp and Qi Dou},

url = {https://michaelkamp.org/wp-content/uploads/2021/05/fedbn_federated_learning_on_non_iid_features_via_local_batch_normalization.pdf https://michaelkamp.org/wp-content/uploads/2021/05/FedBN_appendix.pdf},

year = {2021},

date = {2021-05-03},

urldate = {2021-05-03},

booktitle = {Proceedings of the 9th International Conference on Learning Representations (ICLR)},

abstract = {The emerging paradigm of federated learning (FL) strives to enable collaborative training of deep models on the network edge without centrally aggregating raw data and hence improving data privacy. In most cases, the assumption of independent and identically distributed samples across local clients does not hold for federated learning setups. Under this setting, neural network training performance may vary significantly according to the data distribution and even hurt training convergence. Most of the previous work has focused on a difference in the distribution of labels or client shifts. Unlike those settings, we address an important problem of FL, e.g., different scanners/sensors in medical imaging, different scenery distribution in autonomous driving (highway vs. city), where local clients store examples with different distributions compared to other clients, which we denote as feature shift non-iid. In this work, we propose an effective method that uses local batch normalization to alleviate the feature shift before averaging models. The resulting scheme, called FedBN, outperforms both classical FedAvg, as well as the state-of-the-art for non-iid data (FedProx) on our extensive experiments. These empirical results are supported by a convergence analysis that shows in a simplified setting that FedBN has a faster convergence rate than FedAvg. Code is available at https://github.com/med-air/FedBN.},

keywords = {batch normalization, black-box parallelization, deep learning, federated learning},

pubstate = {published},

tppubtype = {inproceedings}

}

2020

Petzka, Henning; Adilova, Linara; Kamp, Michael; Sminchisescu, Cristian

Feature-Robustness, Flatness and Generalization Error for Deep Neural Networks Workshop

2020.

Tags: deep learning, flatness, generalization, learning theory, loss surface, neural networks, robustness

@workshop{petzka2020feature,

title = {Feature-Robustness, Flatness and Generalization Error for Deep Neural Networks},

author = {Henning Petzka and Linara Adilova and Michael Kamp and Cristian Sminchisescu},

url = {http://michaelkamp.org/wp-content/uploads/2020/01/flatnessFeatureRobustnessGeneralization.pdf},

year = {2020},

date = {2020-01-01},

urldate = {2020-01-01},

journal = {arXiv preprint arXiv:2001.00939},

keywords = {deep learning, flatness, generalization, learning theory, loss surface, neural networks, robustness},

pubstate = {published},

tppubtype = {workshop}

}

2019

Kamp, Michael

Black-Box Parallelization for Machine Learning PhD Thesis

Universitäts- und Landesbibliothek Bonn, 2019.

Tags: averaging, black-box, communication-efficient, convex optimization, deep learning, distributed, dynamic averaging, federated, learning theory, machine learning, parallelization, privacy, radon machine

@phdthesis{kamp2019black,

title = {Black-Box Parallelization for Machine Learning},

author = {Michael Kamp},

url = {https://d-nb.info/1200020057/34},

year = {2019},

date = {2019-01-01},

urldate = {2019-01-01},

school = {Universitäts- und Landesbibliothek Bonn},

abstract = {The landscape of machine learning applications is changing rapidly: large centralized datasets are replaced by high volume, high velocity data streams generated by a vast number of geographically distributed, loosely connected devices, such as mobile phones, smart sensors, autonomous vehicles or industrial machines. Current learning approaches centralize the data and process it in parallel in a cluster or computing center. This has three major disadvantages: (i) it does not scale well with the number of data-generating devices since their growth exceeds that of computing centers, (ii) the communication costs for centralizing the data are prohibitive in many applications, and (iii) it requires sharing potentially privacy-sensitive data. Pushing computation towards the data-generating devices alleviates these problems and allows to employ their otherwise unused computing power. However, current parallel learning approaches are designed for tightly integrated systems with low latency and high bandwidth, not for loosely connected distributed devices. Therefore, I propose a new paradigm for parallelization that treats the learning algorithm as a black box, training local models on distributed devices and aggregating them into a single strong one. Since this requires only exchanging models instead of actual data, the approach is highly scalable, communication-efficient, and privacy-preserving.

Following this paradigm, this thesis develops black-box parallelizations for two broad classes of learning algorithms. One approach can be applied to incremental learning algorithms, i.e., those that improve a model in iterations. Based on the utility of aggregations it schedules communication dynamically, adapting it to the hardness of the learning problem. In practice, this leads to a reduction in communication by orders of magnitude. It is analyzed for (i) online learning, in particular in the context of in-stream learning, which allows to guarantee optimal regret and for (ii) batch learning based on empirical risk minimization where optimal convergence can be guaranteed. The other approach is applicable to non-incremental algorithms as well. It uses a novel aggregation method based on the Radon point that allows to achieve provably high model quality with only a single aggregation. This is achieved in polylogarithmic runtime on quasi-polynomially many processors. This relates parallel machine learning to Nick’s class of parallel decision problems and is a step towards answering a fundamental open problem about the abilities and limitations of efficient parallel learning algorithms. An empirical study on real distributed systems confirms the potential of the approaches in realistic application scenarios.},

keywords = {averaging, black-box, communication-efficient, convex optimization, deep learning, distributed, dynamic averaging, federated, learning theory, machine learning, parallelization, privacy, radon machine},

pubstate = {published},

tppubtype = {phdthesis}

}

Petzka, Henning; Adilova, Linara; Kamp, Michael; Sminchisescu, Cristian

A Reparameterization-Invariant Flatness Measure for Deep Neural Networks Workshop

Science meets Engineering of Deep Learning workshop at NeurIPS, 2019.

Tags: deep learning, flatness, generalization, learning theory, loss surface, neural networks, robustness

@workshop{petzka2019reparameterization,

title = {A Reparameterization-Invariant Flatness Measure for Deep Neural Networks},

author = {Henning Petzka and Linara Adilova and Michael Kamp and Cristian Sminchisescu},

url = {https://arxiv.org/pdf/1912.00058},

year = {2019},

date = {2019-01-01},

urldate = {2019-01-01},

booktitle = {Science meets Engineering of Deep Learning workshop at NeurIPS},

keywords = {deep learning, flatness, generalization, learning theory, loss surface, neural networks, robustness},

pubstate = {published},

tppubtype = {workshop}

}

2018

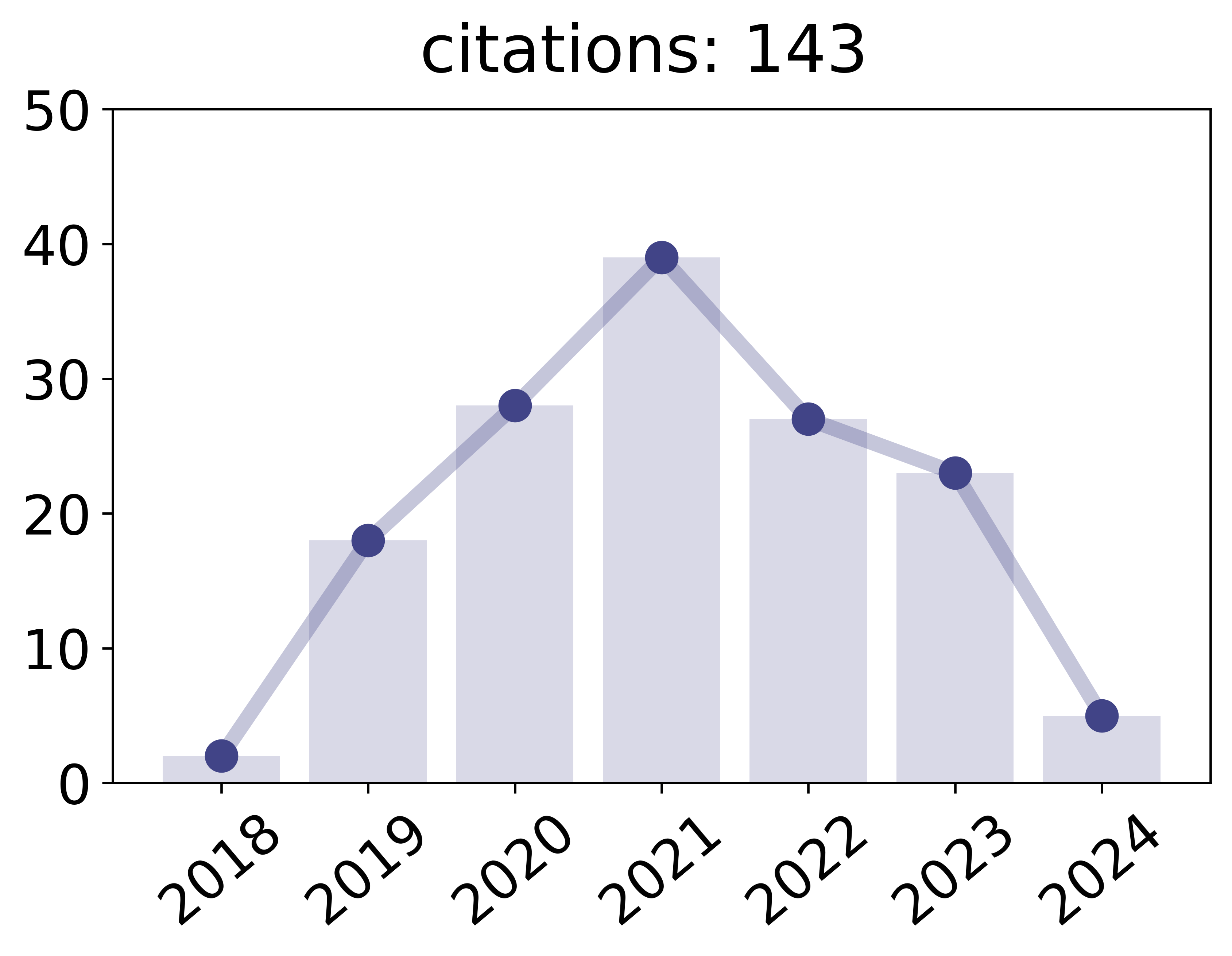

Kamp, Michael; Adilova, Linara; Sicking, Joachim; Hüger, Fabian; Schlicht, Peter; Wirtz, Tim; Wrobel, Stefan

Efficient Decentralized Deep Learning by Dynamic Model Averaging Proceedings Article

In: Machine Learning and Knowledge Discovery in Databases, Springer, 2018.

Tags: decentralized, deep learning, federated learning

@inproceedings{kamp2018efficient,

title = {Efficient Decentralized Deep Learning by Dynamic Model Averaging},

author = {Michael Kamp and Linara Adilova and Joachim Sicking and Fabian Hüger and Peter Schlicht and Tim Wirtz and Stefan Wrobel},

url = {http://michaelkamp.org/wp-content/uploads/2018/07/commEffDeepLearning_extended.pdf},

year = {2018},

date = {2018-09-14},

urldate = {2018-09-14},

booktitle = {Machine Learning and Knowledge Discovery in Databases},

publisher = {Springer},

abstract = {We propose an efficient protocol for decentralized training of deep neural networks from distributed data sources. The proposed protocol allows to handle different phases of model training equally well and to quickly adapt to concept drifts. This leads to a reduction of communication by an order of magnitude compared to periodically communicating state-of-the-art approaches. Moreover, we derive a communication bound that scales well with the hardness of the serialized learning problem. The reduction in communication comes at almost no cost, as the predictive performance remains virtually unchanged. Indeed, the proposed protocol retains loss bounds of periodically averaging schemes. An extensive empirical evaluation validates major improvement of the trade-off between model performance and communication which could be beneficial for numerous decentralized learning applications, such as autonomous driving, or voice recognition and image classification on mobile phones.},

keywords = {decentralized, deep learning, federated learning},

pubstate = {published},

tppubtype = {inproceedings}

}